Understanding statistical power is essential if you want to avoid wasting your time in psychology. The power of an experiment is its sensitivity – the likelihood that, if the effect tested for is real, your experiment will be able to detect it.

Statistical power is determined by the type of statistical test you are doing, the number of people you test and the effect size. The effect size is, in turn, determined by the reliability of the thing you are measuring, and how much it is pushed around by whatever you are manipulating.

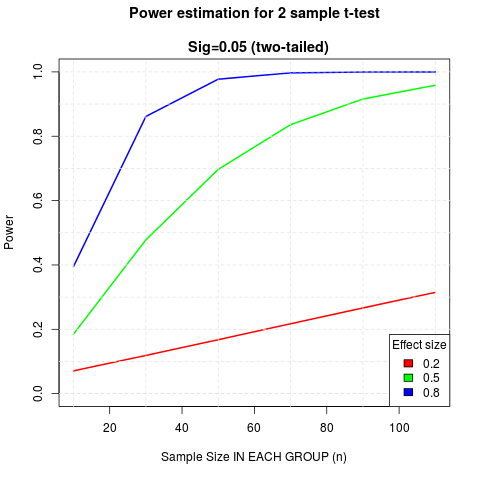

Since it is a common test, I’ve run a power analysis for a two-sample (two-sided) t-test, for small, medium and large effects (as conventionally defined). The results should worry you.

This graph shows you how many people you need in each group for your test to have 80% power (a standard desirable level of power – meaning that if your effect is real you’ve an 80% chance of detecting it).

Things to note:

- even for a large (0.8) effect you need close to 30 people in each group (total n = 60) to have 80% power

- for a medium effect (0.5) this is more like 70 people in each group (total n = 140)

- the required sample size increases dramatically as effect size drops

- for small effects, the sample required for 80% power is around 400 in each group (total n = 800).
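If you want to check numbers like these without R, here is a minimal sketch in Python. It is not the code behind the graph: it assumes a 5% two-sided significance threshold and uses a normal approximation with a small correction term, where an exact calculation would work from the noncentral t distribution. The function name `n_per_group` is my own.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d, power=0.80, alpha=0.05):
    """Approximate per-group n for a two-sided, two-sample t-test.

    Assumption: normal approximation plus a small-sample correction,
    not an exact noncentral-t calculation.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the two-sided test
    z_power = z.inv_cdf(power)          # quantile matching the desired power
    n = 2 * ((z_alpha + z_power) / d) ** 2 + z_alpha ** 2 / 4
    return ceil(n)


for label, d in [("small", 0.2), ("medium", 0.5), ("large", 0.8)]:
    print(f"{label:>6} (d = {d}): about {n_per_group(d)} per group")
```

This gives 394, 64 and 26 per group for small, medium and large effects, a little under the round figures read off the graph but telling the same story.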

What this means is that if you don’t have a large effect, studies with a between-groups analysis and a total n of less than 60 aren’t worth running. Even if you are studying a real phenomenon, you aren’t using a statistical lens with enough sensitivity to be able to tell. You’ll get to the end and won’t know if the phenomenon you are looking for isn’t real or if you just got unlucky with who you tested.
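To put a rough number on that, you can turn the calculation around and ask what power a fixed sample buys you. The sketch below is a normal approximation under an assumed 5% two-sided threshold, so it runs slightly optimistic compared with an exact t-test calculation; `approx_power` is a name I made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def approx_power(d, n_per_group, alpha=0.05):
    """Approximate power of a two-sided, two-sample test (normal approximation)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    shift = d * sqrt(n_per_group / 2)  # expected size of the test statistic
    # Probability of landing beyond either critical value
    return z.cdf(shift - z_alpha) + z.cdf(-shift - z_alpha)


for d in (0.2, 0.5, 0.8):
    print(f"d = {d}, 30 per group: power is roughly {approx_power(d, 30):.2f}")
```

With 30 people per group, a medium effect comes out at roughly a coin flip, and a small effect at a little over one in ten.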

Implications for anyone planning an experiment:

- Is your effect very strong? If so, you may rely on a smaller sample. (For illustrative purposes, the effect size of the male-female height difference is ~1.7, so large enough to detect with a small sample. But if your effect is this obvious, why do you need an experiment?)

- You really should prefer within-sample analysis, whenever possible (power analysis of this left as an exercise)

- You can get away with smaller samples if you make your measure more reliable, or if you make your manipulation more impactful. Both of these will increase your effect size: the first by narrowing the variance within each group, the second by increasing the distance between them.
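The last point is easier to see with numbers. Below is a small sketch of the standardised effect size (Cohen's d) for two equal-sized groups. All the means and standard deviations are invented purely for illustration, and `cohens_d` is my own helper, not something from the original analysis.

```python
from math import sqrt


def cohens_d(mean_a, mean_b, sd_a, sd_b):
    """Standardised mean difference, assuming equal group sizes."""
    pooled_sd = sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd


# Hypothetical numbers, chosen only to show the two routes to a bigger effect
baseline = cohens_d(105, 100, 10, 10)       # starting point
less_noise = cohens_d(105, 100, 7, 7)       # more reliable measure: smaller spread
stronger_push = cohens_d(108, 100, 10, 10)  # stronger manipulation: bigger gap

print(f"baseline:              d = {baseline:.2f}")
print(f"more reliable measure: d = {less_noise:.2f}")
print(f"stronger manipulation: d = {stronger_push:.2f}")
```

Either route moves a medium effect (0.5) up towards a large one, which is what lets you get away with fewer participants.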

Technical note: I did this by cribbing code from Rob Kabacoff’s helpful page on power analysis. Code for the graph shown here is here. I use and recommend RStudio.

Cross-posted from www.tomstafford.staff.shef.ac.uk where I irregularly blog things I think will be useful for undergraduate Psychology students.

Within-sample analyses don’t always increase power (if you subtract baseline rather than controlling for it): http://datacolada.org/2015/06/22/39-power-naps-when-do-within-subject-comparisons-help-vs-hurt-yes-hurt-power/

I’ve always liked this graphic from Andrew Gelman on “power = .06”.

http://andrewgelman.com/2014/11/17/power-06-looks-like-get-used/

The scary thing is that, in small studies, if you DO find a result, your point estimate is almost certainly an over-estimate of the actual effect (see the “exaggeration ratio” part of the linked paper on the Gelman post).
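A quick simulation makes the over-estimate visible. This is only a sketch under simplifying assumptions: the estimated effect is treated as normally distributed around the true effect with standard error sqrt(2 / n per group), and the threshold is 5% two-sided. It is not the method from the linked paper, and `exaggeration_ratio` is a name chosen here for illustration.

```python
import random
from math import sqrt
from statistics import NormalDist, mean


def exaggeration_ratio(true_d, n_per_group, alpha=0.05, sims=20000, seed=1):
    """Average size of significant estimates, relative to the true effect."""
    rng = random.Random(seed)
    se = sqrt(2 / n_per_group)  # assumed standard error of the estimated d
    cutoff = NormalDist().inv_cdf(1 - alpha / 2) * se
    significant = []
    for _ in range(sims):
        estimate = rng.gauss(true_d, se)
        if abs(estimate) > cutoff:  # only "significant" results get noticed
            significant.append(abs(estimate))
    return mean(significant) / true_d


print("small study (20 per group): ", round(exaggeration_ratio(0.2, 20), 2))
print("large study (400 per group):", round(exaggeration_ratio(0.2, 400), 2))
```

With a true effect of 0.2 and 20 people per group, an estimate only reaches significance if it is more than about three times the true effect, so every "finding" from the small study is a large over-estimate. With 400 per group the ratio stays much closer to 1.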

Just between you and me: small sample experiments are like doing empiricism with the jake leg: https://mindhacks.com/2011/06/30/the-ginger-jake-poisonings/

I was actually gonna post the link to that Simonsohn post, but since you beat me to it, this just became an excuse to say thanks again for teaching me what the jake leg was.