A documentary on the trauma of war, banned by the US government for more than 30 years, has found its way onto YouTube as a freely viewable video.

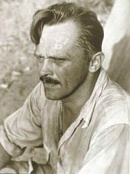

During World War Two, legendary director John Huston, then a fresh face in Hollywood, was commissioned to make three propaganda films for the US Army.

The third film, Let There Be Light, was made in 1946 – just as the war ended – and focussed on the psychiatric treatment of soldiers traumatised in combat.

This is a description from the fantastic book The Empire of Trauma:

With no political agenda, and anxious to keep scrupulously to the task he had been given, Huston applied to the letter the principle of objectivity he had followed in the two previous documentaries. For more than three months, he filmed the daily life of former combatants hospitalized at Mason General, a military hospital on Long Island. The courage and sense of sacrifice of these men was clearly portrayed, as the Pentagon had clearly requested. But equally apparent was the fact that some of them were utterly destroyed: their fear, their shame, and their tears showed clearly, as did their contempt for military authorities. The film also documented the arrogance and harshness of the psychiatrists and brutality of some of their therapeutic methods. Remarkably, when the film received its world premiere at the Cannes Film Festival in 1981, the emotional response of the viewers and critics was muted, for the film did not meet the expectations of an audience seeking revelations about the military and medical practices of the time.

What made the film so controversial in 1946 made it commonplace in 1981. This had nothing to do with film-making; it concerned the way the film portrayed the effects of trauma.

Let There Be Light portrays the “emotionally damaged” soldier as an everyday person “forced beyond the limit of human endurance”. “Every man”, it says, “has his breaking point”.

This is the modern view of trauma, widely accepted in psychiatry and in today’s media narratives, although it is itself something of a simplification of what we actually know about how people react to extreme events.

But in 1946, and especially in military psychiatry, the most widely accepted view was that soldiers who became mentally ill were psychologically weak or malingering.

The fact that the film showed US soldiers not as the glorified heroes the public wanted but as disabled veterans meant that it would be a huge propaganda disaster – likely compounded by the fact that most people saw these conditions as character flaws or shameful faking.

The idea that these were ordinary men who had been through extraordinary circumstances was just too far ahead of its time to seem realistic.

And this is why it was censored for 35 years, until its first public showing in 1981, when it seemed nothing more than a passé propaganda film that just reflected what we all assumed had always been the case but actually never was.

Link to film on YouTube

Link to downloadable version on Internet Archive.

The Observer has an

An amazing description of how sociologists who wanted to do field studies in Belfast during the height of

In a

Oxford neuropsychologist

How long is a severed head conscious for? The question has troubled students of the human body for centuries and generated countless, possibly mythical stories. History of medicine blog The Chirurgeon’s Apprentice has finally looked through the records to

The New York Times
