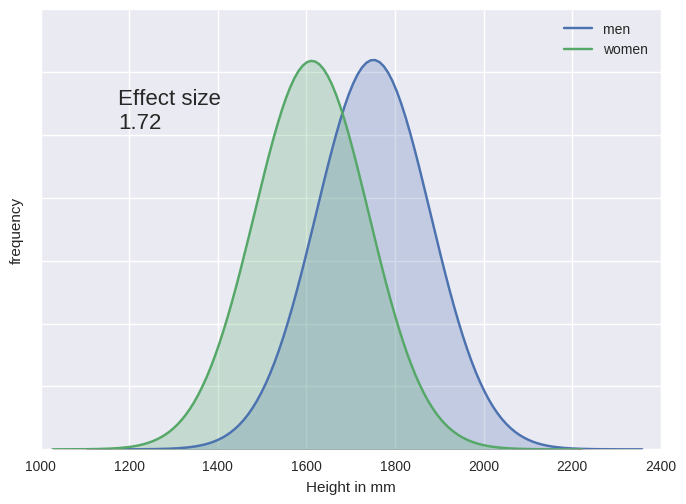

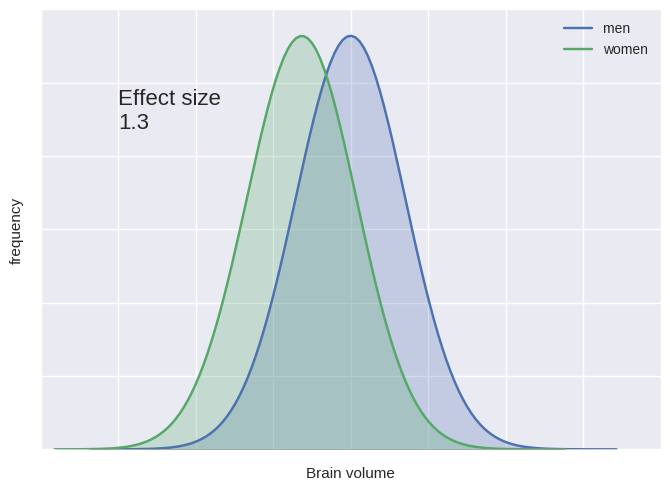

There is a popular notion that men and women are very different in their cognitive abilities. The evidence for this may be weaker than you expect. Janet Hyde advances what she calls the ‘gender similarities hypothesis’, ‘which holds that males and females are similar on most, but not all, psychological variables’. In a 2016 review she states:

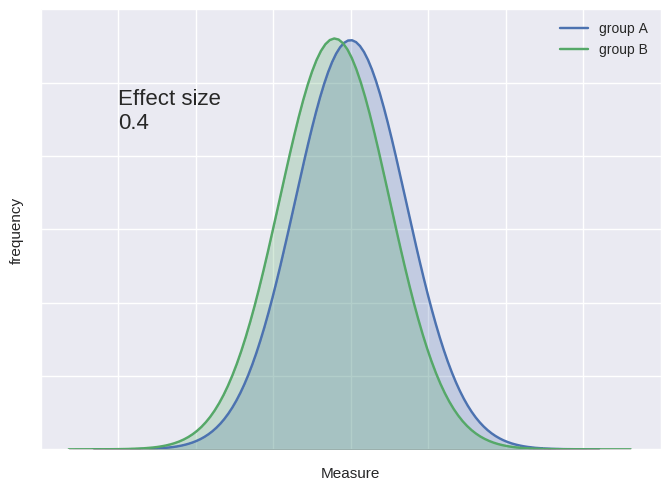

According to meta-analyses, however, among both children and adults, females perform equally to males on mathematics assessments. The gender difference in verbal skills is small and varies depending on the type of skill assessed (e.g., vocabulary, essay writing). The gender difference in 3D mental rotation shows a moderate advantage for males.

So of three celebrated examples of differences in ability, only one actually shows a moderate gender difference. Other abilities show no or negligible gender differences, Hyde concludes. Gender differences in ability may be inflated in the popular imagination.

Worth noting is that the name of the game here isn’t to find gender differences in behaviour. That’s too easy. Women wear more make-up, for example, and men are more likely to wear trousers. The game is to find a measure which reflects some more fundamental aspect of mental capacity. Hence the focus on vocabulary size, mental rotation ability, maths ability and the like. These may be less subject to the vagaries of exactly what is expected of each gender, but that’s a shaky assumption. Indeed, it would be weird if different roles and expectations for men and women didn’t produce different motivations and opportunities for practice of cognitive abilities such as these.

The real challenge is to find immutable gender differences, or to track differences in how abilities develop under different conditions. Without this evidence, we’re not going to be sure which gender differences are immutable, and which are contingent on the specific psychological history of particular men and particular women living in our particular societies.

One way of addressing this challenge is to look at how gender differences change across different societies, or across time as society changes. A 2014 study, ‘The changing face of cognitive gender differences in Europe’, did just that, showing that less gender-restricted educational opportunities tended to decrease some gender differences but increase others. In other words, increasing equality in educational opportunity magnified some differences between the sexes.

You can read my take on this in this piece for The Conversation: Are women and men forever destined to think differently?

The Gender Similarities Hypothesis: Hyde, J. S. (2005). The gender similarities hypothesis. American Psychologist, 60(6), 581-592.

2016 update: Hyde, J. S. (2016). Sex and cognition: gender and cognitive functions. Current Opinion in Neurobiology, 38, 53-56.

Previously: Gender brain blogging: Sex differences in brain size, no male and female brain types.
