The UK government’s use of psychology has suddenly become controversial. It has promised to put psychologists into job centres “to provide integrated employment and mental health support to claimants with common mental health conditions”, but with the potential threat of having assistance removed if people do not attend treatment.

It has been criticised as ‘treating unemployment as a mental problem’ or an attempt to ‘psychologically reprogramme the unemployed’ and has triggered an upcoming march on a London job centre.

Will Davies is a political scientist and the author of the new book The Happiness Industry, which looks at the history and practice of positive psychology as a form of government, and at ‘well-being’ as a way of managing people.

We caught up with him to get some background on the recent controversy.

Is this use of psychology in social policy a quick fix or part of a broader trend?

There is a long history of using psychological techniques in order to encourage work or boost productivity. In my book, I trace this right back to the 1920s, when industrial psychologists first started to study the attitudes and emotions of people in the workplace, with a view to understanding how people could be more committed to work. Some of this was born out of a fear of socialism or trade union organising, i.e. that unhappy workers might rebel against business in some way.

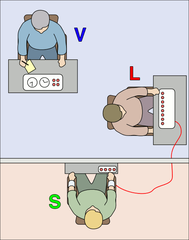

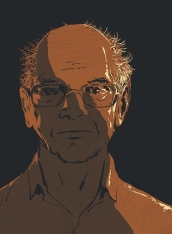

But I also think something shifted fundamentally in the 1990s, as economists started to look at psychological survey data, and the field of ‘happiness economics’ took off. Economists were struggling to understand why unemployment sometimes remained high, even during times of economic growth. And one thing they began to realise was that unemployment causes types of psychological harm (namely depression) that can leave people unable to work, or unable to seek work. From an economist’s perspective, it stands to reason that the efficient course of action would therefore be to design a policy instrument that could alleviate this psychological problem. This is exactly what the economist Richard Layard believed he had found when he met the psychologist David Clark, who preached the virtues of Cognitive Behavioral Therapy (CBT) to him.

Layard studied the evidence on CBT in the mid-2000s, and quickly put together a ‘business case’ (of the sort the Treasury needs to see, if it is to endorse any new public spending) for why it was an efficient use of public money, given its apparent success in getting people off benefits of various kinds. Of course, this strongly economistic approach to psychology also carries various risks, one of which is that everything comes to be viewed in a highly instrumentalised way. That is precisely what is now provoking a backlash.

A lot of the protests have centred on the idea that unemployed people might be coerced into psychological treatment with threats of having their benefits removed if they don’t attend. But all over the world, companies and individuals are voluntarily signing up to ‘happiness technologies’ that claim to be able to monitor and improve people’s contentment. Taking the coercive aspect away, isn’t this a positive development in terms of also valuing people as emotional beings, rather than simply as cogs in an economic system?

The problem here is that ‘happiness’ is becoming conceived in a heavily reductionist way. There tend to be two main types of reduction at play here.

Firstly, ‘happiness’ is viewed in roughly the way that neo-classical economists have viewed it, as the driver of consumer choices. Happiness economists may well be interested in broader notions of flourishing or life satisfaction than this, but the market research world has become fixated on positive emotions purely in the hope that they can be targeted by advertising or branding campaigns. Since the late 1990s, with the influence of neuroscientist Antonio Damasio, ‘emotions’ have been the hottest research topic in the world of market research.

Secondly, ‘happiness’ is viewed in some biological, most often neurological, sense, as a physical occurrence in the body. The claim that it’s now possible to see emotions via fMRI or physical symptoms (such as muscular reflexes or pulse rate) is no doubt grounded in credible scientific research, but before long, you reach the point where experts are speaking about emotions in ways that entirely bypass the voice of the person who is experiencing them. Philosophically, this is nonsense, for the simple reason that words like ‘happiness’ or ‘sadness’ only make sense to the extent that we can both witness them in others and describe them in ourselves. Behaviorist approaches to emotion ignore this.

Put these two agendas together, and you have an emerging industry of psychological surveillance, which purports to collect objective data about our feelings, and then commercialises it. The way in which digital health companies and technologies (such as wearables) are also offering consumer research or HR services is indicative of this new fusion between economic and physiological methods. All the while, our everyday articulations of ‘happiness’, ‘anger’, ‘joy’ or ‘despair’ are being ignored as ‘unscientific’. Businesses and policy-makers are so obsessed with tracking and measuring emotion that they’re losing the capacity to listen to and understand it.

Of course, a lot of wellbeing data is collected in less clandestine, more analogue ways than this. Surveys are still the main basis for the field of happiness economics and ‘national wellbeing’ indicators. But this could change over time. One of the slightly perturbing trends here is that a lot of this data collection is happening ostensibly for our own benefit, and yet it still happens without us necessarily granting permission. It’s not typically malicious or punitive surveillance (in an Orwellian sense), yet there’s still something creepy about it. Several companies in this field (including Affectiva) were founded to serve medical needs, but then subtly shifted towards more business-oriented applications once they received venture capital. They start with the goal of increasing wellbeing… but gradually shift to the goal of maximising profit. This is a trend worth keeping an eye on.

An “emerging industry of psychological surveillance” sounds ominous. Can you give some examples?

Firstly, there are those that focus on our physical bodies in various ways. Companies such as Affectiva and Realeyes seek to monitor emotions through facial scanning, and offer services to market research companies, amongst others. It is rare (though not unheard of) for these technologies to be used without the consent of those being monitored, and consumer groups are mobilising against intrusive uses of such technologies. Wearable technologies, such as Fitbit and Apple Watch, are marketed as devices which benefit the wearer, through greater self-knowledge.

But there are emerging cases of employers making it mandatory to wear them, or health insurers offering lower premiums to those who wear them, because of the data they can gather about behaviour, stress and wellbeing. Humanyze is a company that seeks to track employee activity (including emotions) using wearable technology, while Virgin Pulse is an HR service that includes various tools (including wearable technologies) to keep track of an employee’s state of mind and health.

Secondly, there are ways of calculating emotional variations through our use of language. The field of ‘sentiment analysis’ involves teaching computers to recognise the emotions conveyed in a sentence, and can be put to use to monitor the general happiness level of Twitter users, for example, or the spread of emotions amongst Facebook users. It is also integral to social media-based market research, or the ‘people analytics’ used by employers to look at employee performance via analysis of email traffic. One company, Beyond Verbal, offers indications of emotion based on tone of voice during phone calls. This has various commercial applications.

The sociologist Nikolas Rose has charted how governments increasingly see individual psychology as part of their governmental responsibility. What role do psychologists, mental health workers and the like have in affecting this trend?

We have to be wary of exaggerating the powers of governments and businesses in this area. A lot of my book, like the work of Nikolas Rose on this topic, implicitly looks at the goals, measurement tools and strategies that policy-makers and managers have at their disposal. However, these can seem more effective (and potentially more sinister) than they are in practice. One thing that sociologists such as Rose have stressed is that the process of ‘translation’ between a public policy (such as tackling depression in job centres) and the actual front-line intervention is long and tortuous, and there are various individuals and institutions along the way that can divert and subvert it, for better or worse.

Professionals working in psychiatry, clinical psychology and psychotherapy retain some power to influence how things play out. Since the 1970s, more quantitative, positivist traditions have come to the fore, which grant less autonomy to professional judgement, and rely more on things like questionnaires and standardised metrics. Naturally, that means that expertise potentially becomes more amenable to governmental co-option. And yet, especially in an area like mental health, the success or failure of a policy is ultimately in the hands of someone providing the care or the listening. It’s not clear that something like IAPT (Improving Access to Psychological Therapies) can succeed, even by its own yardstick, if it becomes ever more integrated into the pursuit of ‘efficiency’ and benefit cuts.

Speaking as an outsider, it seems to me that there is still further scope for the ‘psy’ disciplines to offer coordinated alternatives, which aren’t merely resistant, but offer new policies across society. At present, government policy is driven by an economic rationality, combined with a reductionist, behaviorist notion of mental health. This approach is guilty of both over-medicalising social problems and over-economising policy solutions. A critical bio-psycho-social alternative should have things to say, not only about mental health services or welfare, but about the damage wrought elsewhere in society.

Look at our schools, for example: there is a crisis of stress and anxiety amongst teachers, while pupils are suffering the mental strains of constant examination, no doubt justified on the back of some nonsense about Britain being in a ‘global race’. If politicians are serious about the pursuit of happiness and wellbeing, and don’t want those phenomena to be simply manufactured in a mechanised fashion, then the psy disciplines and professions might want to develop some blueprints for how labour markets or companies should be governed on that basis. I remain sceptical as to whether policy-makers conceive of psychology as anything other than an economistic route to ‘behavior change’, but let’s find out.

You can follow Will Davies on Twitter as @davies_will. There are more details of his book The Happiness Industry here.